Resource: Blog

Topic: Edge Compute

Date: Jun 28, 2023

We’re excited to announce a new capability that involves three of our favorite things: Kubernetes (K8s), edge computing, and multi-cloud. (Hang on, this isn’t cloud buzzword Bingo, we promise.)

StackPath Edge Compute now supports Virtual Kubelet (VK) for use with StackPath Containers (SP// Containers).

That means developers and operators who need the flexibility of a K8s cluster (whether it’s deployed on-premises, in a hyperscale cloud, across multiple clouds, or a mix of all the above) can integrate SP// Containers into their clusters and deploy worker nodes with very low latency between the node and external sources and destinations of data.

And, with the power of VK, adding our edge compute to their clusters doesn’t mean adding complexity or another API/console to manage.

Just as the emergence of cloud computing drove the strategy to “design for failure,” edge computing is driving the need to “design for distributed data sources.” VK support in StackPath Edge Compute is one way we’re making that easier.

Some details:

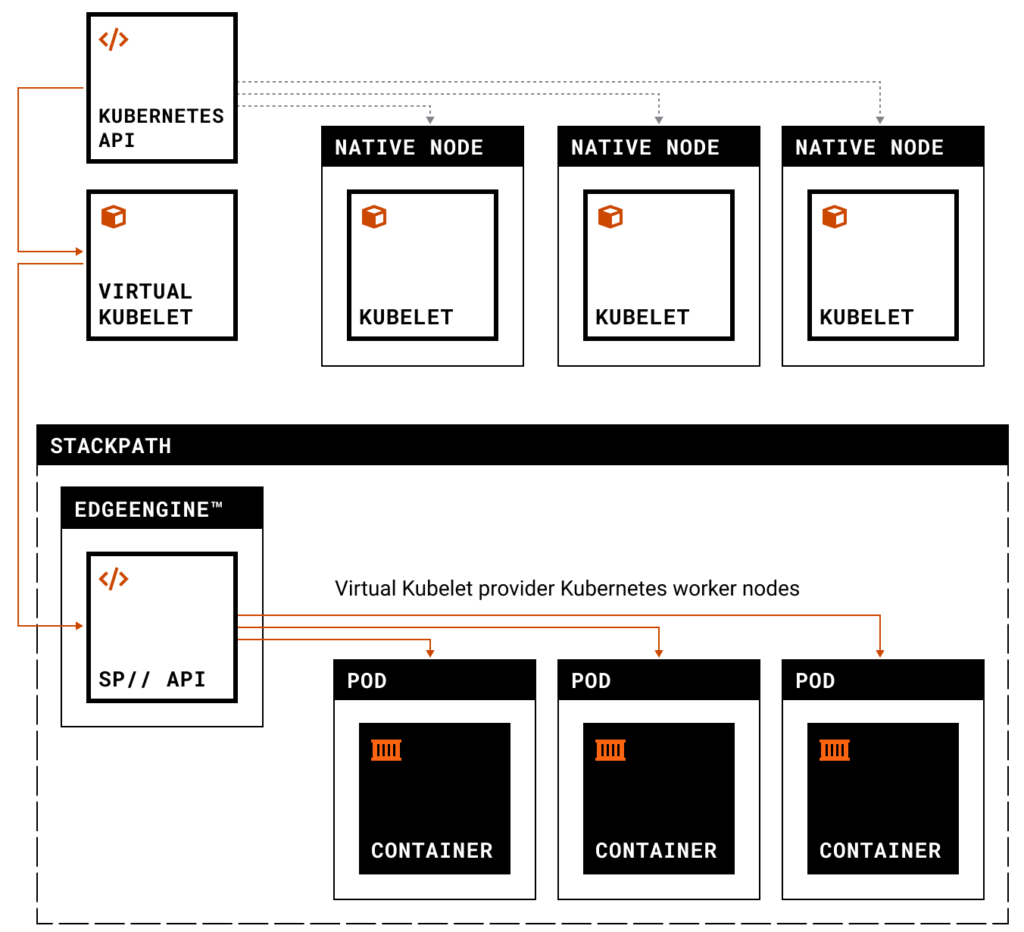

You probably know that a K8s cluster consists of a “control node” and multiple “worker nodes.” Control nodes include an API server. Traditionally, each worker node has a “Kubelet”: the agent that registers the worker node with the control node’s API server, communicates with the server, and applies the server’s instructions by creating, updating, and destroying containers on the worker node.
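To make the Kubelet's role described above concrete, here is a minimal sketch in Python. It is a toy model, not the real Kubelet or its API: `ToyKubelet`, its `register` and `sync` methods, and the dictionary used as an "API server" are all hypothetical names invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class ToyKubelet:
    """Hypothetical stand-in for a Kubelet; not the real agent or its API."""

    node_name: str
    running: dict = field(default_factory=dict)  # container name -> image

    def register(self, api_server: dict) -> None:
        # Announce this worker node to the (toy) API server's node list.
        api_server.setdefault("nodes", []).append(self.node_name)

    def sync(self, desired: dict) -> list:
        """Make `running` match `desired` and return the actions taken."""
        actions = []
        # Destroy containers that are running but no longer wanted.
        for name in sorted(set(self.running) - set(desired)):
            del self.running[name]
            actions.append(("destroy", name))
        # Create missing containers and update ones whose image changed.
        for name, image in sorted(desired.items()):
            if name not in self.running:
                actions.append(("create", name))
            elif self.running[name] != image:
                actions.append(("update", name))
            else:
                continue  # already in the desired state
            self.running[name] = image
        return actions
```

In this sketch, the API server only ever hands the agent a desired state; the agent works out which create, update, and destroy steps are needed on its own node.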

Virtual Kubelet (VK) is a revolutionary open-source project and technology. Rather than running on a worker node, VK registers with the control node’s API server, where it masquerades as a worker node’s Kubelet. The control node can then connect through VK to API servers for external computing platforms (e.g., StackPath Workload APIs) and manage the provisioning and control of worker nodes and their containers on those platforms.
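The idea can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the Virtual Kubelet provider interface and not the StackPath Workload API: `FakeWorkloadAPI`, `VirtualNode`, and every method name below are hypothetical. The point is only that the "node" the cluster sees holds no containers itself and forwards each pod operation to an external platform.

```python
class FakeWorkloadAPI:
    """Hypothetical in-memory stand-in for an external platform's API."""

    def __init__(self):
        self.workloads = {}

    def create(self, name, image):
        self.workloads[name] = {"image": image, "status": "Running"}

    def delete(self, name):
        self.workloads.pop(name, None)

    def status(self, name):
        workload = self.workloads.get(name)
        return workload["status"] if workload else "NotFound"


class VirtualNode:
    """Looks like one worker node to the cluster; runs nothing locally."""

    def __init__(self, name, backend):
        self.name = name
        self.backend = backend  # the external platform that does the work

    def create_pod(self, pod):
        # Translate the cluster's request into a call on the external API.
        self.backend.create(pod["name"], pod["image"])

    def delete_pod(self, pod_name):
        self.backend.delete(pod_name)

    def get_pod_status(self, pod_name):
        # Status is read back from the platform, not from a local runtime.
        return self.backend.status(pod_name)
```

Because the cluster only talks to the node-shaped object, swapping `FakeWorkloadAPI` for a different backend would not change how the control node schedules or inspects pods.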

We’re proud to now be part of the VK community as an official Virtual Kubelet Provider. That means the rest of the community has certified that our implementation of VK support meets or exceeds the project’s standards and practices. And we’re committed to, and enthusiastic about, contributing to the project and its ongoing development and innovation.

Check out the complete “how to” details here. But, short story:

So, if you have a latency-sensitive workload and rely on K8s (or have wanted to), we’ve got the edge you’ve been looking for. No need to purchase, deploy, and manage additional hardware. No need to compromise proximity to data sources/destinations. Get low-latency compute in the exact metro area you desire without wasting resources. With SP// Edge Compute and VK support, you can focus on building your applications for performance, and we’ll supply the infrastructure and speed.