Introduction

The demand for real-time processing is increasing as digital workloads evolve and innovations emerge. For cloud-based workloads, however, real-time processing depends on low-latency network connections. Traditional cloud computing, while powerful, faces challenges in meeting the latency requirements of applications that deliver real-time experiences. Edge computing is emerging as the ideal infrastructure solution for such workloads. This paper describes these workloads and their latency sensitivity, then examines the critical characteristics of edge computing and the advantages it can provide.

What is Latency?

In computing, “latency” describes the time that elapses between initiating and completing a task. “Network latency” is the time that elapses while data travels through a network from its source to its destination.

Network latency has many causes. It can stem from something as basic as low-quality network cables. Each piece of hardware that data packets pass through, such as a router or switch, adds time (and the risk of data corruption or blocking) to the packets’ trip. The most fundamental cause is the physical distance between the data’s source and destination, combined with the fixed speed of light.
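The distance effect can be estimated with simple arithmetic. The sketch below is illustrative only: it assumes signals travel through optical fiber at roughly the speed of light divided by 1.5 (an approximate refractive index chosen for this example), and it ignores routing detours, queuing, and per-hop processing, so real latency will be higher.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458  # speed of light in a vacuum, km/s
FIBER_SLOWDOWN = 1.5  # assumption: approximate refractive index of optical fiber


def propagation_delay_ms(distance_km: float, round_trip: bool = False) -> float:
    """Lower bound on the time a signal needs to cover distance_km of fiber."""
    if distance_km < 0:
        raise ValueError("distance_km must be non-negative")
    speed_in_fiber_km_s = SPEED_OF_LIGHT_KM_S / FIBER_SLOWDOWN
    one_way_ms = distance_km / speed_in_fiber_km_s * 1000.0
    return 2 * one_way_ms if round_trip else one_way_ms


if __name__ == "__main__":
    # Compare a nearby, a regional, and an intercontinental distance.
    for km in (50, 500, 5000):
        rtt = propagation_delay_ms(km, round_trip=True)
        print(f"{km:>5} km: at least {rtt:.2f} ms round trip")
```

Under these assumptions, no amount of hardware or software tuning can push the delay below this floor; only reducing the distance can.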

As the causes above suggest, the more extensive and complex a network, the more latency it is likely to introduce, because there is room for more of those variables. There is little network latency in a simple network (such as in a home or office), more in an extensive network (such as across a business’s campuses or locations), and a far larger amount in a world-spanning network of networks (the Internet), on which data must traverse countless network hops and different ISPs.

What are Latency-Sensitive Cloud Applications?

A latency-sensitive application is one for which a delay between data delivery (whether coming or going) and data processing impacts the success or quality of the application’s performance.

Primarily, an application’s latency sensitivity is inherent to the function of the application itself. For instance, a video-conferencing application facilitates a real-time conversation between two or more people or systems. Too much latency would render the application useless. Most modern applications have one or more functions like that, which need to sense, analyze, and act on commands and data as they are created, collected, or requested.

Latency sensitivity becomes greater and more complicated when these applications are deployed in the cloud or consumed over the Internet, because hundreds or thousands of miles may lie between the data’s origin or destination and the place where it is processed.

Example Latency-Sensitive Cloud Applications

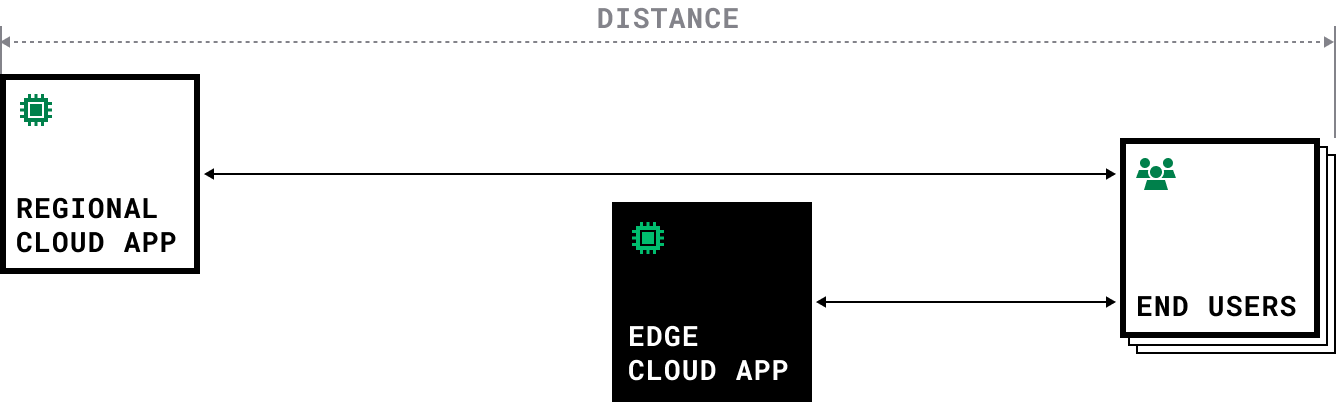

Media Streaming & Delivery

Services like video streaming, audio streaming (including music, podcasts, and audiobooks), or live broadcasting rely on continuous data delivery. High latency can result in buffering, where the media content pauses frequently to allow data to catch up. This interrupts the user experience and can lead to frustration.

Digital/Cloud Gaming

With the rise of cloud-based video games, reducing latency has become critical. Input commands from players must travel to the server and back with minimal delay to ensure responsive gameplay. In multiplayer online games, even a slight delay in network communication can cause gameplay issues such as lag, jitter, or rubber-banding. Gamers require low latency to ensure quick response times to their actions, maintaining the fluidity and competitiveness of the gaming experience.

Video Conferencing

Video conferencing applications require real-time audio and video transmission between participants. High latency can lead to synchronization issues between audio and video streams, causing delays in communication and making conversations feel unnatural or disjointed.

VoIP (Voice over Internet Protocol)

Like video conferencing and live broadcasting, VoIP services rely on real-time audio transmission. Latency can introduce delays or distortions in voice communication, impacting the clarity and flow of conversation.

High-frequency Trading

High-frequency trading applications exist to complete the highest volume of transactions in the shortest time. High latency can delay the completion of transactions, reducing the overall volume. Additionally, solutions for mitigating latency often compromise data integrity, leading to transaction error or failure.

Real-Time Auctions

Applications ranging from online advertising platforms to network service platforms rely on real-time auctioning functions that instantly review and select from multiple options (bids) in response to a new request. For instance, a consumer visits a website, and the website instantaneously processes the consumer’s demographic information, conducts an auction among multiple advertisers, and then serves the consumer the ad that is most relevant to the consumer and most profitable for the website. Latency either creates a lag in the consumer’s experience or compromises the efficiency and value of the auction.
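The trade-off between lag and auction value can be illustrated with a toy model. This is a simplified sketch, not how any particular ad platform works: the `Bid` type, the `run_auction` function, and the deadline value are all hypothetical names and numbers introduced for illustration. Bids that arrive after the deadline are simply excluded.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Bid:
    bidder: str
    amount: float       # bid price in arbitrary currency units
    response_ms: float  # how long the bid took to arrive (hypothetical measurement)


def run_auction(bids: list[Bid], deadline_ms: float) -> Optional[Bid]:
    """Return the highest bid that arrived within the deadline, or None."""
    on_time = [bid for bid in bids if bid.response_ms <= deadline_ms]
    return max(on_time, key=lambda bid: bid.amount, default=None)


if __name__ == "__main__":
    bids = [
        Bid("advertiser_a", 2.00, response_ms=40),
        Bid("advertiser_b", 3.50, response_ms=120),  # best price, slowest reply
        Bid("advertiser_c", 1.00, response_ms=10),
    ]
    # A tight deadline keeps the page fast but drops the highest bid.
    print(run_auction(bids, deadline_ms=100))
    # A loose deadline captures the highest bid but makes the consumer wait.
    print(run_auction(bids, deadline_ms=150))
```

In this model, lowering network latency between the auction and its bidders lets more bids arrive on time without extending the deadline.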

Security and Safety Device Monitoring

The core functionality of systems such as fire alarms, security cameras, and access control systems (both consumer and commercial) requires immediate availability, visibility, and analysis. Network latency between system devices and system monitoring or management nodes can lead to health and safety issues.

Artificial Intelligence/Machine Learning (AI/ML) Systems and Tools

Network latency directly influences the performance, efficiency, and user experience of cloud-based AI/ML deployments.

Most obviously, latency impacts AI/ML deployments that require real-time or near-real-time decision-making or user-interaction capabilities.

Such deployments include:

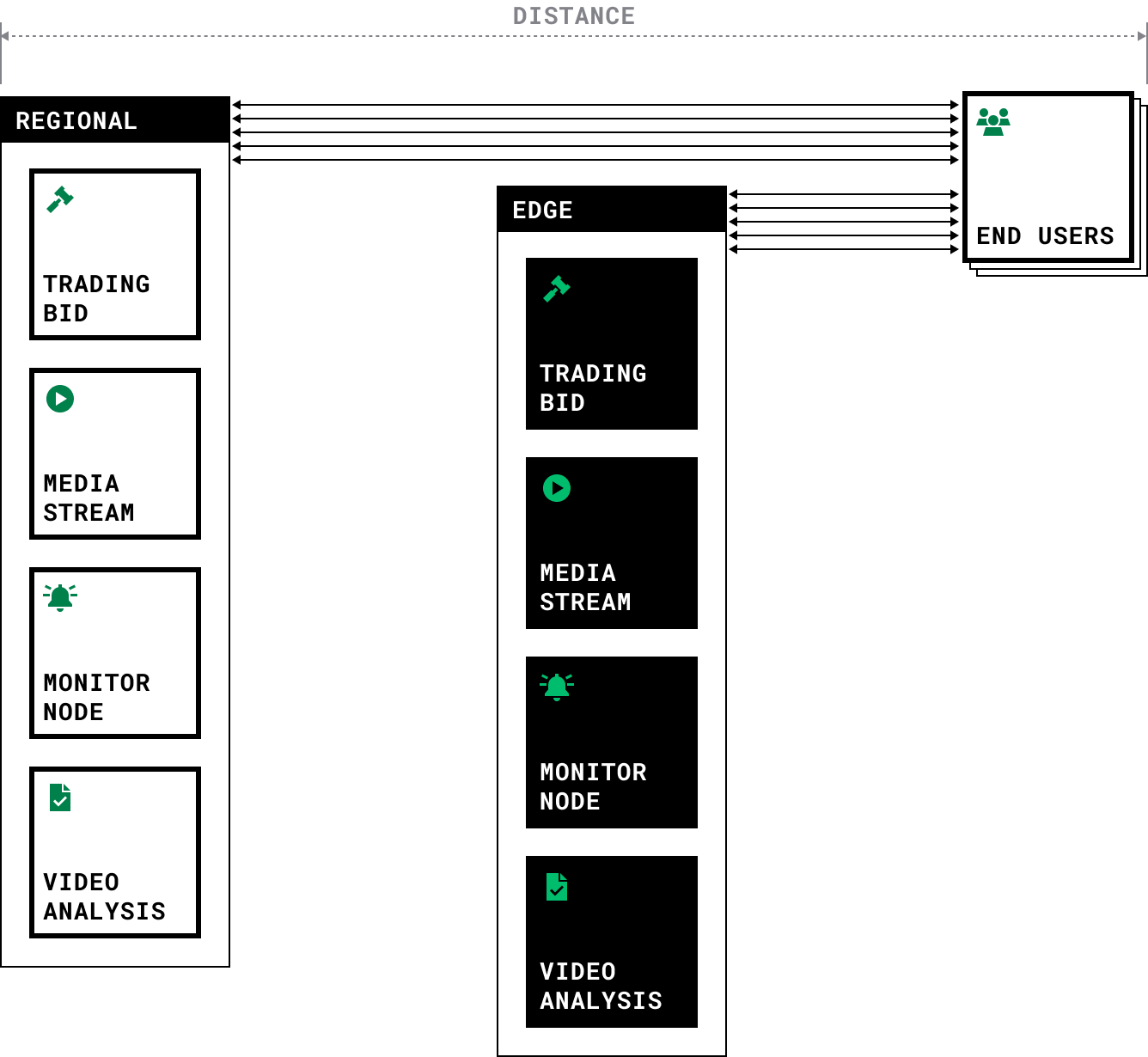

Inference Engines

Inference engines use AI/ML-trained models to predict or estimate outcomes from new observations, enabling data analytics to analyze the habits of devices and users to anticipate what a user or system will need next. Latency directly affects the speed at which the deployed AI model can make predictions or inferences. Longer latency means it takes more time for data to travel between the client and the server where the model resides, resulting in slower response times. This latency is detrimental for applications like autonomous vehicles, where split-second decisions are necessary for safety.

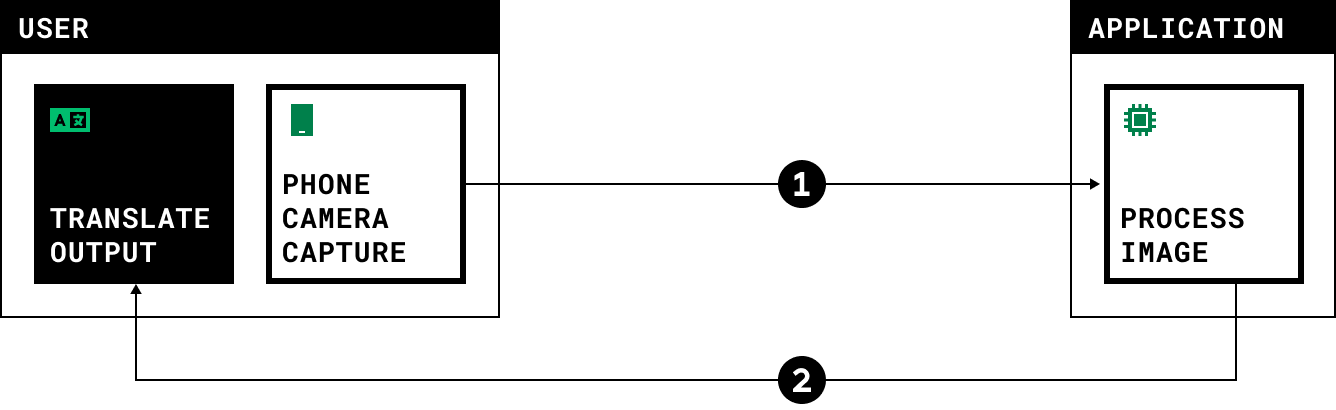

Computer Vision (Real Image Processing)

Computers and systems acquire information from digital images, videos, and other visual inputs, for example by translating street signs into another language or reading warning labels on unfamiliar materials. This information may be used in interaction with humans (such as providing a quick translation of a foreign language) or to facilitate or influence fully automated actions (such as prompting a change in autopilot direction). High latency can lead to poor user experience[1] and compromised data integrity.

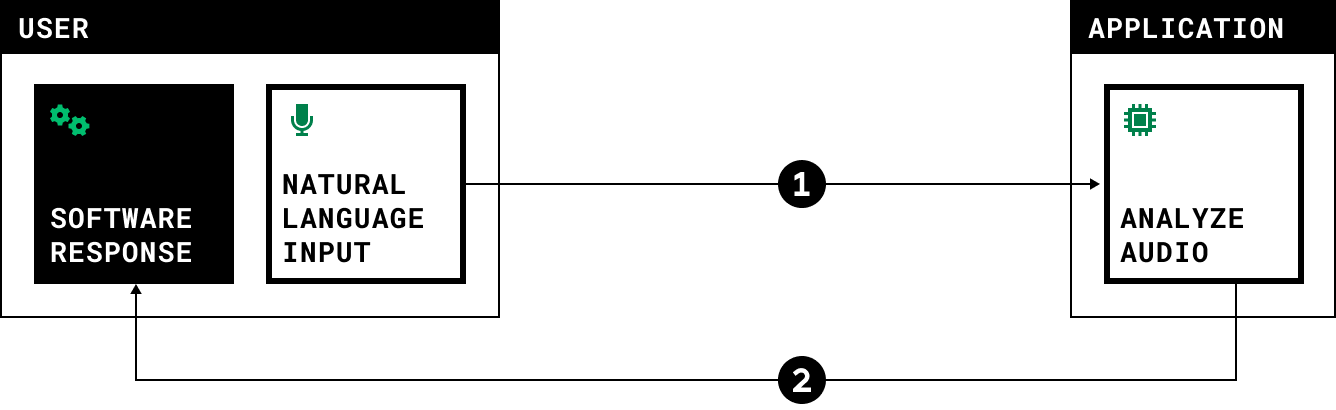

Natural Language Processing

Systems such as interactive chatbots and virtual assistants convert human-rendered language into a machine-friendly format and then process it to provide humans with helpful information and minimal frustration in interactions such as customer requests. High latency can lead to poor user experience.[1] Users expect quick responses, so when latency is significant they may perceive the system as sluggish or unresponsive, leading to frustration and disengagement.

Additionally, latency impacts AI/ML deployments where models are trained collaboratively across multiple nodes or devices. Latency affects communication between nodes and can slow down the convergence of models during training, impacting the overall efficiency of the learning process. It can also affect data synchronization and consistency; in scenarios where multiple instances of a model serve requests simultaneously, ensuring consistency across these instances despite latency variations becomes a challenge.

Minimizing latency through optimized network infrastructure, efficient communication protocols, and distributed computing techniques is crucial for maximizing the effectiveness of these applications. Providing low, consistent latency for AI/ML-powered interactions, analysis, and tools has a tremendous positive impact on the experience and usability of the application. Further, minimizing latency can reduce the computational resources needed to handle incoming requests, as faster responses mean each instance can handle more requests per unit of time, leading to cost savings and resource optimization.
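The relationship between response time and resource needs can be approximated with Little's law (concurrency equals throughput multiplied by time in system). The sketch below assumes each request occupies one processing slot for its full response time and that every instance has a fixed number of slots; the function names and the numbers are illustrative, not measurements.

```python
import math


def max_throughput_rps(slots_per_instance: int, response_time_ms: float) -> float:
    """Requests per second one instance can sustain, by Little's law."""
    if slots_per_instance <= 0 or response_time_ms <= 0:
        raise ValueError("slots_per_instance and response_time_ms must be positive")
    return slots_per_instance * 1000.0 / response_time_ms


def instances_needed(target_rps: float, slots_per_instance: int,
                     response_time_ms: float) -> int:
    """Smallest number of instances that can absorb target_rps."""
    if slots_per_instance <= 0 or response_time_ms <= 0:
        raise ValueError("slots_per_instance and response_time_ms must be positive")
    # Total slots in use at steady state = arrival rate * time each request is held.
    busy_slots = target_rps * response_time_ms / 1000.0
    return math.ceil(busy_slots / slots_per_instance)


if __name__ == "__main__":
    for response_ms in (250, 125):
        n = instances_needed(target_rps=1000, slots_per_instance=8,
                             response_time_ms=response_ms)
        print(f"{response_ms} ms per request -> {n} instances for 1000 req/s")
```

In this simplified model, halving the time each request is held roughly halves the number of instances required for the same load.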

Mitigating Latency with Edge Computing

When cloud computing emerged, businesses had several concerns about moving their workloads to the cloud. These concerns included processing power, availability, accessibility, flexibility, security, cost, and the latency introduced by having the Internet between the business and its infrastructure.

The maturation of server and networking hardware, the transformation of application architectures, and the evolution of development and operations strategies have mitigated many of those concerns. However, they cannot change the physics of how fast data can travel. Latency created by distance remains the most significant obstacle to moving workloads from on-premises or on-device environments to the cloud.

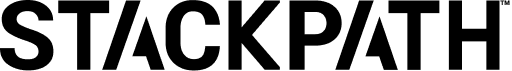

The fundamental difference between edge computing and traditional cloud computing makes edge computing a powerful IaaS solution for latency-sensitive applications. Unlike conventional cloud computing, where data processing occurs in a limited number of centralized, hyperscale data centers, edge computing leverages servers, gateways, and devices deployed in as many relevant locations as possible. Computation and data storage can occur closer to where data is generated or consumed.
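One way to picture "closer to where data is generated or consumed" is to route each user to the geographically nearest location. The sketch below uses the haversine great-circle formula; the location names and approximate city coordinates are hypothetical examples, and geographic distance is only a proxy, since real routing depends on network topology rather than straight lines.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius

# Hypothetical edge locations: name -> approximate (latitude, longitude) in degrees.
EDGE_LOCATIONS = {
    "frankfurt": (50.11, 8.68),
    "dallas": (32.78, -96.80),
    "singapore": (1.35, 103.82),
}


def haversine_km(a: tuple[float, float], b: tuple[float, float]) -> float:
    """Great-circle distance in km between two (lat, lon) points in degrees."""
    lat1, lon1 = map(math.radians, a)
    lat2, lon2 = map(math.radians, b)
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))


def nearest_edge(user: tuple[float, float],
                 locations: dict[str, tuple[float, float]] = EDGE_LOCATIONS) -> str:
    """Name of the location with the shortest great-circle distance to the user."""
    return min(locations, key=lambda name: haversine_km(user, locations[name]))


if __name__ == "__main__":
    paris = (48.86, 2.35)
    name = nearest_edge(paris)
    print(name, round(haversine_km(paris, EDGE_LOCATIONS[name])), "km")
```

With only a few centralized data centers, many users would fall thousands of kilometers from the nearest one; adding locations shrinks that worst-case distance.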

Key Characteristics

Proximity to Data Sources

Edge computing minimizes the physical distance between the computation resources and the data sources, reducing latency and network hops.

Decentralization

Computation is distributed across a network of devices, preventing reliance on a single central server.

Real-time Processing

Edge computing enables real-time data analysis and decision-making at the network’s edge.[2]

Scalability

Edge computing systems can scale horizontally by adding more edge devices, providing flexibility in resource allocation.

Key Components

Devices

Devices such as smartphones, computers, TVs, gaming consoles, sensors, cameras, and IoT devices that generate data.

Edge Locations

Localized data centers, physically located at Internet Exchanges, that handle processing and storage closer to the edge devices.

Internet Exchanges

Common grounds of IP networking, allowing participant Internet service providers to exchange data destined for their respective networks.[3]

Key Advantages

Improved User Experience and Engagement

Edge computing improves and protects end-user experience and engagement. Processing and storing data physically closer to the end user shortens round-trip times, which cuts load and operation times. It also reduces the points of failure between the workload and the end user, so the application hangs and fails less often. Altogether, end-user experience is improved, and use of the workload is less encumbered, even if the workload is robust and performance-heavy.

Higher Bandwidth Efficiency and Cost Effectiveness

Edge computing helps optimize bandwidth usage by processing data locally and transmitting only relevant information to centralized data centers. This not only reduces the load on network infrastructure but also minimizes data transfer costs, making it a cost-effective solution.
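As a rough illustration of "transmitting only relevant information", the sketch below has an edge node reduce a batch of sensor readings to a summary plus any out-of-range values before sending anything upstream. The function names, the JSON payload format, and the alert threshold are assumptions made for this example.

```python
import json
from statistics import mean


def summarize_at_edge(readings: list[float], alert_threshold: float) -> dict:
    """Reduce a batch of readings to summary statistics plus alert values."""
    if not readings:
        raise ValueError("readings must not be empty")
    return {
        "count": len(readings),
        "min": min(readings),
        "max": max(readings),
        "mean": round(mean(readings), 2),
        # Only readings above the threshold are forwarded individually.
        "alerts": [r for r in readings if r > alert_threshold],
    }


def payload_bytes(obj) -> int:
    """Size of the object when serialized as UTF-8 JSON."""
    return len(json.dumps(obj).encode("utf-8"))


if __name__ == "__main__":
    # Synthetic data: 1000 ordinary readings and one anomaly.
    readings = [20.0 + (i % 5) * 0.1 for i in range(1000)] + [95.0]
    summary = summarize_at_edge(readings, alert_threshold=90.0)
    print("raw bytes:    ", payload_bytes(readings))
    print("summary bytes:", payload_bytes(summary))
```

The savings depend entirely on how much of the raw data the central system actually needs; workloads that require every reading would see little benefit from this approach.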

Improved Security Posture

Decentralized processing at the edge enhances security by limiting the exposure of sensitive data to centralized cloud servers during transit. This approach reduces the attack surface and mitigates the risk of data breaches,[4] addressing security and privacy concerns inherent in centralized cloud architectures.

Increased Scalability and Flexibility

Edge computing offers scalability by allowing organizations to distribute computation across a network of edge devices. This decentralized approach provides flexibility in resource allocation, enabling organizations to adapt to changing workloads and requirements without relying solely on centralized data centers.

Higher Infrastructure Cost Efficiency

Edge computing contributes to cost efficiency by reducing the need for large-scale data center infrastructure. Organizations can leverage existing edge devices and gateways, optimizing resource utilization and minimizing the costs of maintaining extensive data center facilities.

Improved Reliability

The distributed nature of edge computing enhances system reliability. In traditional cloud architectures, a single point of failure can disrupt entire services. With its decentralized approach, edge computing reduces the impact of individual device failures, contributing to increased overall system reliability.

Edge Computing is available now at StackPath

StackPath is made for applications requiring such low latency that their computing resources must be as close as possible to the sources or destinations of their data. Our locations are within major metro areas (as opposed to rural areas), with the fewest milliseconds of latency to as many users and businesses as possible. More places. Closer to data. Easier to use. For higher overall performance, greater access and insight, and lower total cloud costs.

To learn more about how StackPath can help you with low-latency cloud applications, visit the rest of our website or contact our team today.

References